IRL Pokédex

Handheld Pokédex that runs a custom CNN + TTS stack on-device to test edge inference and labeling workflows.

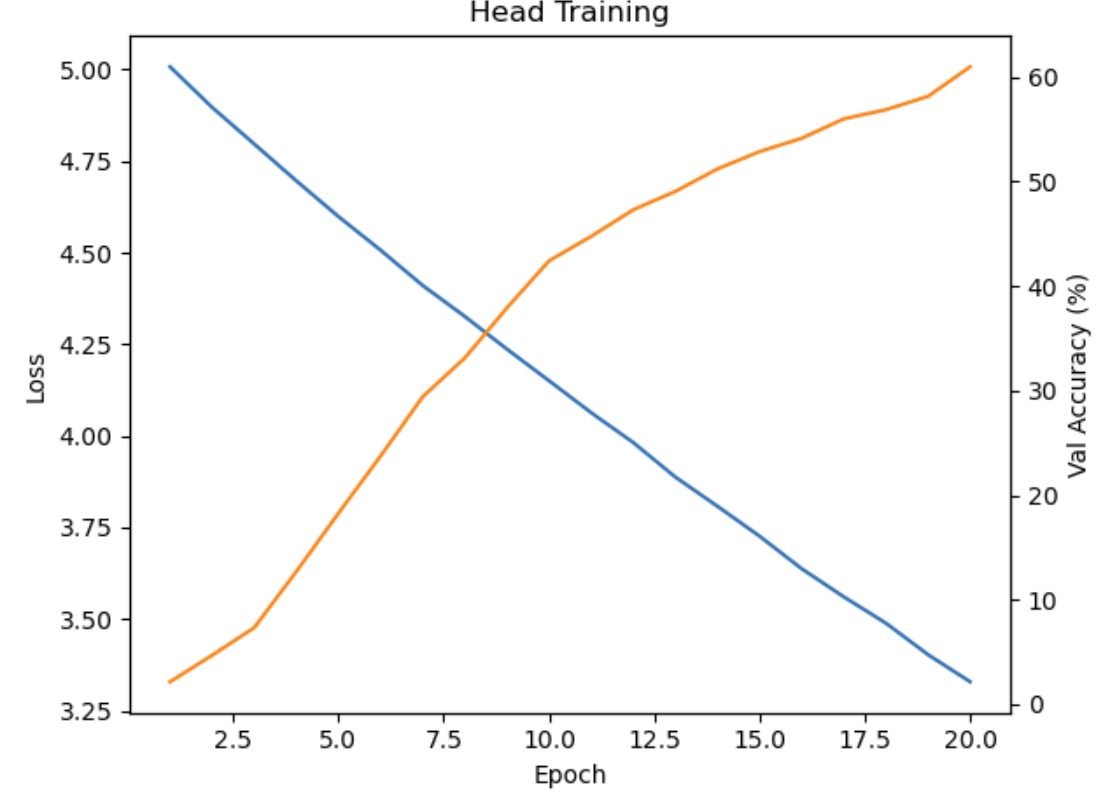

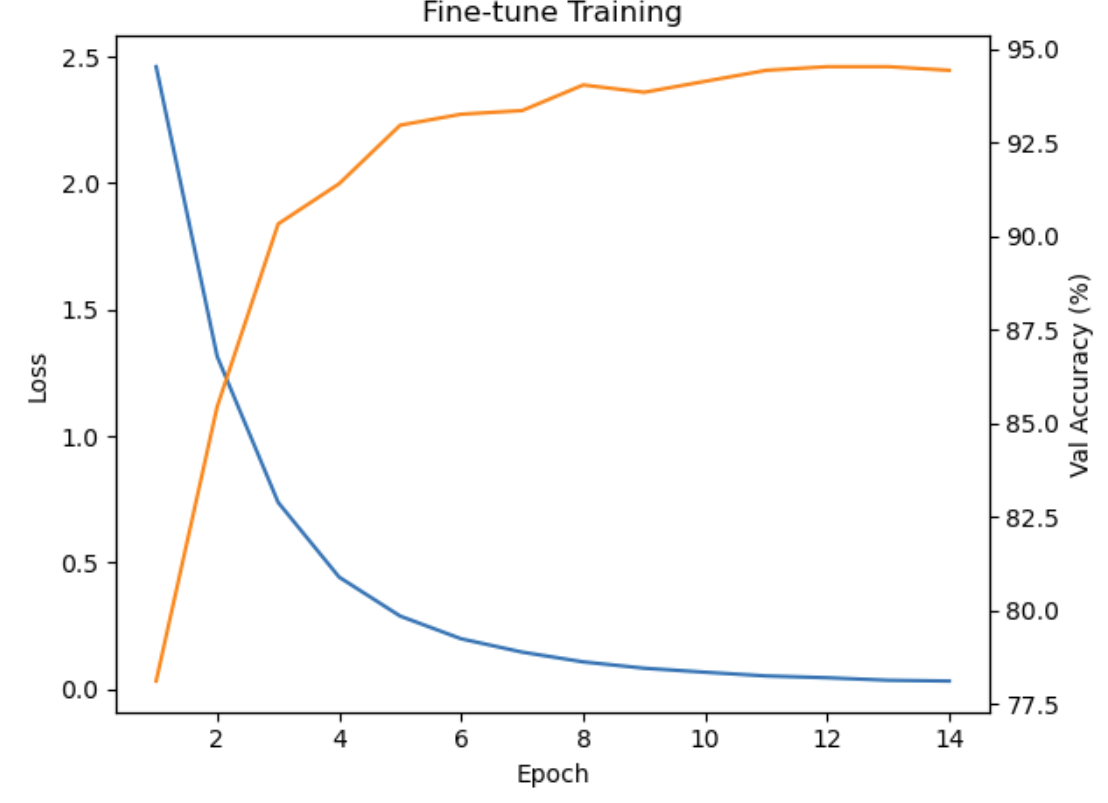

Collecting + fine-tuning

Started with head-on captures to seed the first dataset, then folded each batch into a fast labeling + fine-tune loop.

Each batch moves through a lightweight labeling tool that tracks per-class balance and highlights confusing pairs (like Pikachu vs Raichu). The next run regenerates a balanced split and exports an ONNX model in minutes.
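The balance tracking and confusing-pair check can be sketched in plain Python. This is a minimal sketch, not the actual labeling tool. It assumes samples arrive as `(path, label)` tuples and that a validation run yields `(true_label, predicted_label)` pairs. `balanced_split` and `top_confusions` are hypothetical names, and downsampling to the rarest class is one assumed way to "regenerate a balanced split".

```python
import random
from collections import Counter, defaultdict


def balanced_split(samples, val_frac=0.2, seed=0):
    """Hypothetical helper: downsample every class to the size of the
    rarest class, then split each class into train/val with a fixed seed."""
    by_class = defaultdict(list)
    for path, label in samples:
        by_class[label].append(path)
    n = min(len(paths) for paths in by_class.values())
    rng = random.Random(seed)
    train, val = [], []
    for label in sorted(by_class):
        paths = sorted(by_class[label])  # sort first so the shuffle is reproducible
        rng.shuffle(paths)
        paths = paths[:n]  # per-class cap keeps the split balanced
        n_val = int(n * val_frac)
        val += [(p, label) for p in paths[:n_val]]
        train += [(p, label) for p in paths[n_val:]]
    return train, val


def top_confusions(pairs, k=3):
    """Hypothetical helper: count misclassifications as unordered pairs,
    so Pikachu->Raichu and Raichu->Pikachu add to the same bucket."""
    counts = Counter()
    for true, pred in pairs:
        if true != pred:
            counts[tuple(sorted((true, pred)))] += 1
    return counts.most_common(k)
```

For example, 10 Pikachu shots and 6 Raichu shots become 5 + 5 training images and 1 + 1 validation images. Treating confusions as unordered pairs surfaces "Pikachu vs Raichu" as a single item to re-label, rather than two half-sized entries.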

- Quantized CNN fine-tuned on self-shot photos.

- Exports ONNX for the Pi; no cloud calls.

- Fresh captures automatically expand the next dataset.
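The writeup doesn't say which quantization scheme the CNN uses. The sketch below shows per-tensor affine uint8 quantization, the form expressed by ONNX's `QuantizeLinear`/`DequantizeLinear` operators: `q = clamp(round(x / scale) + zero_point)`. It only illustrates the arithmetic. The real model would be quantized by a toolchain, not by these hypothetical helpers.

```python
def quant_params(xmin, xmax, qmin=0, qmax=255):
    """Derive scale and zero point for a float range (per-tensor, affine)."""
    # Stretch the range to include 0.0 so zero is exactly representable.
    xmin, xmax = min(xmin, 0.0), max(xmax, 0.0)
    scale = (xmax - xmin) / (qmax - qmin) or 1.0  # avoid a zero scale
    zero_point = int(round(qmin - xmin / scale))
    zero_point = max(qmin, min(qmax, zero_point))
    return scale, zero_point


def quantize(x, scale, zero_point, qmin=0, qmax=255):
    # Python's round() rounds half to even, as QuantizeLinear specifies.
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in x]


def dequantize(q, scale, zero_point):
    return [(v - zero_point) * scale for v in q]
```

Each weight or activation is stored as one byte plus a shared `scale`/`zero_point`. After a round trip, every in-range value comes back within about one `scale` step of the original.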

On-device inference + TTS

Frames stream into a short history buffer, and a moving average of recent confidences gates speech so the voice stays steady. A local TTS model with a custom lexicon pronounces names correctly even when offline.
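Here is a minimal sketch of the buffer-plus-threshold gate. It assumes each frame yields one `(label, confidence)` pair. `SpeechGate`, the window of 8 frames and the 0.7 threshold are hypothetical choices, not values from the build. Dividing by the full window length means a label seen in only a few frames can't clear the bar. The reset below half the threshold lets the same Pokémon be announced again after it leaves the frame.

```python
from collections import defaultdict, deque


class SpeechGate:
    """Hypothetical gate: speak a label only when its moving-average
    confidence over the last `window` frames clears `threshold`."""

    def __init__(self, window=8, threshold=0.7):
        self.history = deque(maxlen=window)  # oldest frames fall off automatically
        self.threshold = threshold
        self.last_spoken = None

    def update(self, label, confidence):
        """Add one frame's prediction; return a label to speak, or None."""
        self.history.append((label, confidence))
        totals = defaultdict(float)
        for lbl, conf in self.history:
            totals[lbl] += conf
        best, score = max(totals.items(), key=lambda kv: kv[1])
        avg = score / self.history.maxlen  # average over the full window
        if avg >= self.threshold:
            if best != self.last_spoken:  # don't repeat the same name
                self.last_spoken = best
                return best
        elif avg < self.threshold / 2:
            self.last_spoken = None  # scene changed; allow re-announcing
        return None
```

With `window=4`, four confident Pikachu frames trigger one announcement. A single stray Raichu frame afterwards doesn't change what the voice says.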

- Denoise + resize before inference keeps predictions stable in low light.

- Speech triggers only after the confidence buffer clears a threshold.

- Top class and confidence render on-screen for quick debugging.
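The on-screen top class and confidence can come from a softmax over the model's output. This sketch assumes the ONNX graph returns raw logits. If it already ends in a softmax, skip that step and take the argmax directly. `top1` and `overlay_text` are hypothetical helpers.

```python
import math


def top1(logits, labels):
    """Numerically stable softmax over raw scores; returns (label, prob)."""
    m = max(logits)  # subtract the max so exp() can't overflow
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    i = max(range(len(probs)), key=probs.__getitem__)
    return labels[i], probs[i]


def overlay_text(label, prob):
    """Short debug string for the screen, e.g. 'pikachu 66%'."""
    return f"{label} {prob:.0%}"
```

For logits `[2.0, 1.0, 0.1]` over `["pikachu", "raichu", "eevee"]`, this returns Pikachu at about 0.659, shown as `pikachu 66%`.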

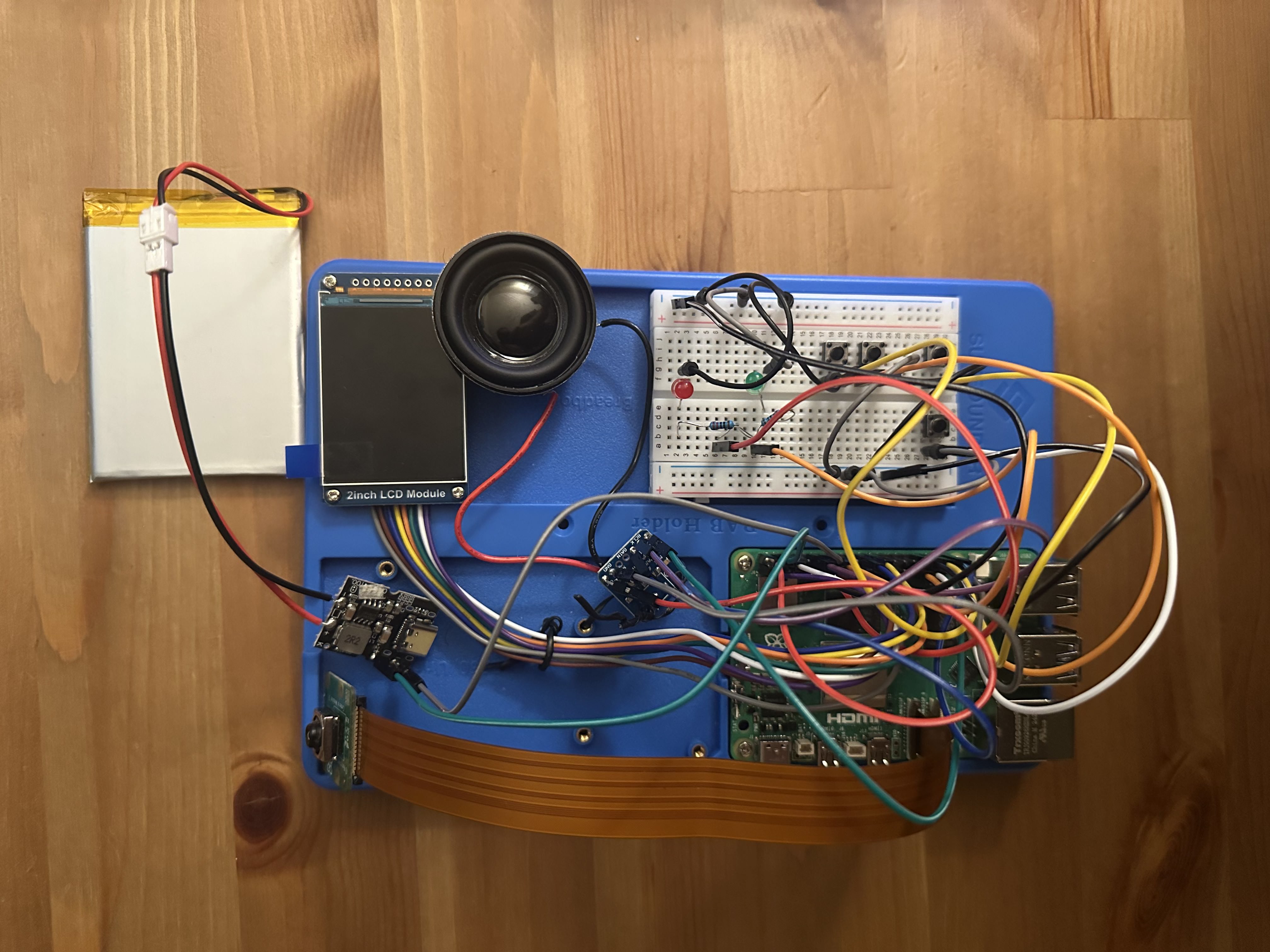

Hardware build

Stack

Pi 4 + USB camera; ONNX Runtime for the quantized CNN; custom lexicon bundled with the offline TTS voice.
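The writeup doesn't describe the lexicon format. One simple approach, assuming the TTS voice takes plain text, is to swap each known name for a phonetic respelling before synthesis. An engine with phoneme input could take phonemes here instead. The `LEXICON` entries and `apply_lexicon` are hypothetical examples.

```python
import re

# Hypothetical lexicon: display name -> respelling the voice reads correctly.
LEXICON = {
    "Pikachu": "PEE-kah-choo",
    "Raichu": "RYE-choo",
}


def apply_lexicon(text, lexicon=LEXICON):
    """Replace whole-word, case-insensitive name matches with respellings."""
    # Longest names first so longer entries win over names they contain.
    names = sorted(lexicon, key=len, reverse=True)
    pattern = re.compile(
        r"\b(" + "|".join(map(re.escape, names)) + r")\b", re.IGNORECASE
    )
    lookup = {name.lower(): spoken for name, spoken in lexicon.items()}
    return pattern.sub(lambda m: lookup[m.group(0).lower()], text)
```

Because the lexicon is a plain dict bundled with the voice, adding a newly trained class only needs one new entry, and pronunciation stays offline.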

Why buttons

Physical capture + playback buttons keep the loop usable when the UI is off or the device is running headless.

Live capture

On-device audio + video